June 21, 2025 by Susanne Coates

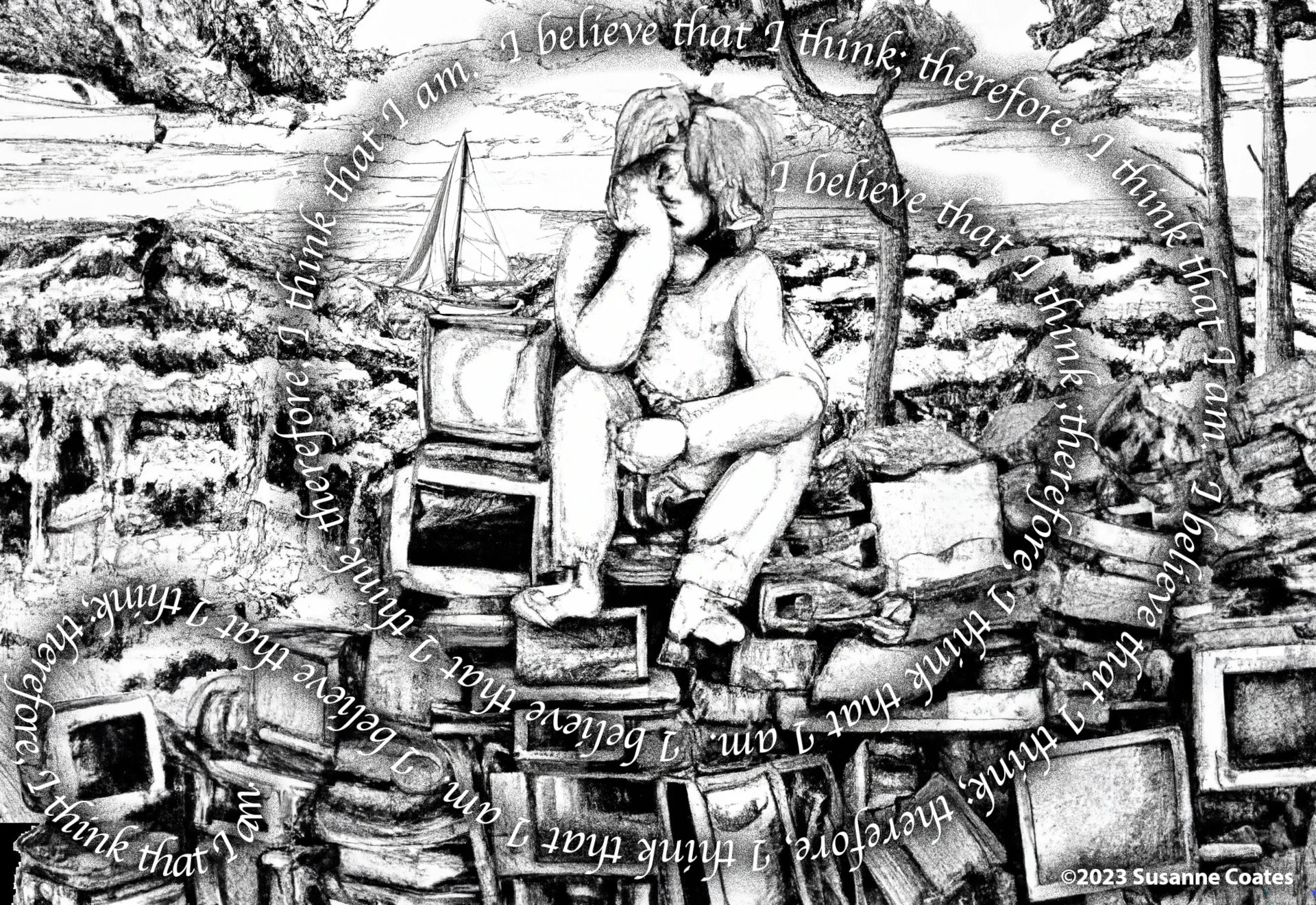

For as long as I can remember, the concept of consciousness has been a topic that has intrigued me. What is it? Is it a tangible thing that we can test? Is it a universal property of living entities? Or is it solely an inward-looking narrative we humans have woven together as a soothing balm for our anxiety over the nature of our existence? With the rapid progress that has been made in AI in recent years and the erosion of “consensus reality” that has led to stark ideological division in the US and abroad, I have found myself again pondering the human perception of reality and, specifically, the nature of consciousness.

Growing up, I remember being told during one of my first fishing trips something along the lines of, “Fish don’t have any feelings,” implying that it was okay to hook and eat them because they were food and not thinking, feeling creatures. As we prepared them for cooking we had to remove the head and tail and scoop out the goopy entrails. The poor creatures would flap about trying to escape their grisly fate, but I was told, “It’s only reflex, they don’t know what’s happening to them.”

Some time later my pet cat was killed by a passing car. I was devastated, but I noticed that in the squashed nonsense that the car’s tyre had made of my beloved pet, there were the same goopy entrails, just like the fish. I had begun learning about human anatomy in grade school and knew that we have the same goopy things inside us. And so, my young self realized that the fish, the cat, and I might not be so different after all, and perhaps the same held true for “feelings.”

As time went on and I grew to adulthood, I went to college and graduate school, learned more about the brain, how it processes information, and how we believe it creates our perception of the world around us. Over this extended period of my life, I began to believe that many species likely possess a stream of consciousness of some sort and might be, to one extent or another, self-aware. Thus, the whole “fish-don’t-have-feelings” line was really just a convenient thing for parents to tell their kids to avoid explaining the “circle of life” (that explanation would come later in the form of a Disney movie). While, from a purely pragmatic standpoint, hunting and eating animals was absolutely necessary for the survival of our ancient ancestors (and still is for some people around the globe), it seems that encouraging kids to believe animals have “no feelings” encourages an anthropocentric way of thinking about their relationship to the natural world. I wonder how much of our present predicament with species extinction can be traced back to early childhood experiences that taught some of us to devalue animals and, by extension, the natural world. Anyhow, I digress.

Years ago, while completing my doctoral research, which used artificial neural networks (ANNs) for biological pattern recognition, I began to wonder, as did others at the time, whether a sufficiently large, complex ANN might exhibit some form of consciousness. This question has gained traction as advancements in artificial intelligence (AI) challenge our understanding of what it means to be ‘conscious.’ If we consider consciousness not merely as a trait of organic evolution but also as a possible outcome of complex informational processes, then AI systems might one day – perhaps very soon – exhibit some form of consciousness. In exploring this possibility, we must consider not only biological but also synthetic forms of consciousness, thus expanding our inquiry into whether machines could potentially experience, perceive, or interact with the world in ways that are fundamentally conscious. This perspective doesn’t just extend our contemplation of consciousness across nature; it also compels us to re-evaluate the boundaries between biological organisms and artificial systems.

In [Kanaev, 2022], the author integrates advances in anthropology and neuroscience to explore the adaptive value of human consciousness. The paper posits that human consciousness holds significant adaptive value in the context of evolution, emphasizing that a complex subjective reality – the multifaceted, personalized nature of how people experience the world around them – enhances the fitness of a species. This perspective challenges the notion that consciousness is merely a byproduct of cognitive evolution, instead proposing that it serves a crucial function in survival and adaptation. The paper argues that the intricate web of subjective experiences, emotions, and self-awareness allows humans to navigate complex social structures, make long-term plans, and adapt to changing environments. These capabilities are not just advantageous, but essential for the survival and prosperity of the species.

Extending these ideas, it seems plausible to suggest that varying degrees of consciousness also serve adaptive purposes in non-human animals, tailored to the ecological niches they inhabit. For example, consider the social structures of certain primates like chimpanzees or bonobos. They engage in intricate social behaviours, such as alliance-building, conflict resolution, and even deception [Hall, 2017], which could, in themselves, be indicative of a form of self-awareness and subjective experience (the personal, internal experience of thoughts, feelings, sensations, and perceptions). As with humans, social consciousness serves an adaptive function, allowing these primates to navigate the complexities of their social hierarchies more effectively, thereby increasing their chances of survival and reproduction.

Similarly, the problem-solving abilities and tool use observed in some bird species like crows or ravens [Osvath, 2018] could be seen as manifestations of a form of consciousness that serves an adaptive purpose. These birds have been observed using sticks to extract insects from tree bark [Rutz et al, 2016] and even using cars to crack open nuts [BBC, 2010]. Such behaviours indicate not just instinct but a level of problem-solving that suggests a form of subjective experience. This cognitive ability allows them to adapt to various environmental challenges, enhancing their ability to find food and evade predators.

In both these examples, the adaptive value of consciousness, or at least some form of it, seems evident. While the level and complexity of consciousness may vary across species, its presence serves a functional role that enhances survival and adaptability. This perspective aligns well with Kanaev’s argument, and extends it to suggest that consciousness is not a unique hallmark of humanity, but a widespread phenomenon across the animal kingdom, manifesting in various forms and serving adaptive functions tailored to the ecological and social demands of each species.

Historically, the concept of consciousness has been closely tied to human exceptionalism—the idea that humans are fundamentally different from and superior to all other forms of life. This perspective has been perpetuated through religious doctrines, philosophical treatises, and even certain scientific theories. For instance, in many religious traditions humans are often considered to be created in the image of a divine being, which confers upon them a special status above all other forms of life. This belief is exemplified in the Biblical account of creation in the Book of Genesis (1:25-28), where humans are given dominion over the earth and its creatures. Similarly, in Islamic teachings (Quran 6:165), humans are seen as Allah’s viceroys on earth, entrusted with the responsibility of stewardship over other life forms.

Philosophers like René Descartes believed that human beings possess a unique kind of rational soul, capable of thought and self-reflection, which animals lack. Descartes famously argued that while animals might exhibit behaviours that appear to be intelligent, they do so purely as automata, driven by mechanical instincts rather than conscious thought. This idea, prevalent in Descartes’ time, has influenced thinking well into the present day. How long did the de facto belief that animals act only on instinct delay scientists in recognizing intelligence in non-human species?

Early Artificial Intelligence (AI) research aimed to create machines that mimic human reasoning and problem-solving skills. Alan Turing, in his seminal 1950 paper “Computing Machinery and Intelligence,” [Turing, 1950] proposed what is now known as the Turing Test. This test measures a machine’s intelligence based on its ability to exhibit human-like conversational behaviour, reflecting the implicit belief that human-like behaviour is the benchmark for true intelligence. It’s both ironic and profoundly fitting that when it was announced that the first AI model had passed the Turing Test, I found myself at a conference at King’s College, Cambridge University —Turing’s alma mater. Ongoing developments in machine learning and neural networks have produced AI systems that can perform tasks previously thought to require human-like intelligence, such as interpreting natural language, writing computer programs, playing complex games like Go, and even creating art, music, and video.

Throughout history, humans have often struggled with the “other,” whether that otherness is defined by nationality, race, religion, or even species. The discovery (or emergence) of a non-human form of consciousness would represent the ultimate “other,” challenging not just social and ethical norms, but also fundamental beliefs about what it means to be sentient, to have a self. This could lead to something akin to “cognitive xenophobia” which might manifest in ways such as the outright rejection and fear of conscious non-human animals or AI, or more subtle forms of bias and discrimination.

In my opinion, the existential dilemma posed by the emergence of machine consciousness would be a far more direct challenge to this anthropocentric worldview and, even at this early juncture in the development of AI, is forcing many of us to reevaluate not just our place in the universe, but also the ethical frameworks we use to justify that position. Philosophers have long debated what constitutes conscious experience. Is it merely the act of perception, or does it also include self-awareness, intentionality, and subjectivity? And if it includes these latter elements, how can we ever hope to understand consciousness in beings that are fundamentally different from us—whether they be non-human animals, extraterrestrial life, or advanced AI systems?

Many species, including octopuses, bats, dolphins, and primates, are not only intelligent but also exhibit varying degrees of consciousness and self-awareness. Granted, an octopus isn’t going to learn calculus or cure cancer—then again neither will most humans—but they likely do possess intelligence and awareness, albeit different from our own. Not only do they respond to pain, exhibit a will to survive, reproduce, eat, and sleep like most living organisms, but they also possess memory, solve problems, and use tools. When held in captivity they are aware of their situation, and they wait for an opportune moment when their captors aren’t looking to attempt escape. There is a growing body of evidence suggesting that cephalopods are far more cognitively complex than previously thought [Sample 2017][Harmon, 2011]. However, smell, touch, and taste are their primary senses—instead of vision—making them difficult to assess for self-awareness with a widely used tool in cognitive science, the mirror test.

The mirror test, also known as the “mirror self-recognition test” or “mark test,” is a behavioural experiment designed to assess an animal’s capacity for self-awareness. Developed by psychologist Gordon Gallup Jr. [Gallup,1970], the test has become a cornerstone in the field of comparative psychology and cognitive science. It aims to determine whether an individual can recognize itself in a mirror, thereby demonstrating a level of self-awareness that goes beyond basic instinctual responses. The underlying premise is that the ability to recognize oneself in a mirror is indicative of higher cognitive functions, such as introspection and the conceptualization of “self.”

The test is conducted in a controlled environment where the subject animal is first given time to acclimate to the presence of a mirror. During this acclimation phase, the animal’s behaviour is observed to note any interactions with the mirror. Initially, many animals react to their reflection as if encountering another individual of their species, displaying social or aggressive behaviours. However, some animals eventually begin to display behaviours that suggest they understand the reflection to be an image of themselves. These behaviours may include self-directed actions like grooming parts of the body that are usually not visible without a mirror or inspecting themselves from various angles.

Once the subject appears to be accustomed to the mirror, the next phase of the test begins. This involves surreptitiously marking the animal with a non-toxic, odourless dye or sticker on a part of its body that is not directly visible without the aid of a mirror, such as the forehead or the side of the face. The animal is then observed to see if it notices the mark. Success in the test is typically defined as the animal using the mirror to guide its actions towards the mark, such as touching or attempting to remove it. This behaviour is interpreted as evidence that the animal recognizes the reflection as an image of itself, thereby demonstrating a level of self-awareness.

The mirror test has been administered to various species, including primates, dolphins, elephants, and corvids such as crows, ravens, and magpies [Anderson, 2011], [Reiss, 2001], [Plotnik, 2006], [Prior, 2008], [Emery, 2006]. Many children are able to pass the mirror test by about 24 months of age; however, failure to pass the test does not necessarily indicate a lack of self-awareness. As critics argue, it could instead be attributed to other factors, such as lack of interest in the mark or cultural factors [SciAm,2010]. Despite these criticisms, the mirror test remains a widely used tool for studying cognitive abilities in both humans and non-human animals.

Recent field research has also highlighted the remarkable cognitive abilities of non-human primates. A male Sumatran orangutan, for example, was observed treating a facial wound using the biologically active plant Fibraurea tinctoria [Laumer, 2024]. This self-medicative behaviour, the first of its kind systematically documented in wild orangutans, involved the orangutan chewing the leaves and applying the juice directly to his wound. This suggests not only an understanding of the medicinal properties of specific plants but also a form of self-care and problem-solving indicative of a higher level of consciousness. The plant used is known for its antibacterial, anti-inflammatory, and analgesic properties, underscoring the orangutan’s advanced use of natural resources for health management.

Findings from both laboratory testing and field observations broaden our understanding of self-awareness in animals, illustrating that the roots of tool use, rudimentary medical knowledge, and self-care practices might extend further back into our shared evolutionary ancestry. Moreover, such behaviours point to an intricate level of cognitive functioning and environmental interaction that parallels early human practices, providing deeper insights into the origins and survival necessity of consciousness.

In addition to self-awareness, there is also what’s known as meta-self-awareness, or simply meta-awareness, which involves the internal recognition of how one’s own thoughts and feelings are perceived and processed. Meta-awareness is what allows you to analyse why you think or feel a certain way and to recognize patterns in your own behaviour. It is thought to be the cognitive process underlying self-evaluation, personal growth, and emotional regulation, and a key component of mindfulness, the practice of actively paying attention to your thoughts and feelings without judging them. In a nutshell, meta-awareness is what allows us to observe and understand our own consciousness and navigate our interactions with the world around us, but it is not well understood in humans and even less so in non-human animals.

Understanding the human mind is analogous to a system trying to understand itself—a pursuit that brings to mind Alan Turing’s computability theory and the Halting Problem [Turing, 1937]. Turing machines, which are theoretical constructs used to formalize the concept of computation, further elucidate these limitations. They reveal that certain problems cannot be solved by any algorithm, implying inherent computational boundaries. Applying this to the human brain, viewed as a biological “computer,” suggests that some aspects of our consciousness or cognition may forever remain “uncomputable” or elusive, obscured by the very nature of how we process information.

This notion is vividly illustrated by the “halting problem,” introduced by Turing. It states that no single algorithm can decide, for every possible program and input, whether that program will eventually cease running or continue indefinitely. This concept has direct parallels in how we might attempt to understand the human mind: just as no algorithm can predict the outcome of every program, including itself, our minds might not foresee or comprehend all possible cognitive states or conclusions.
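For readers who like to see the idea in code, the heart of Turing's argument can be sketched in a few lines of Python. This is an illustration rather than a proof: the names `make_contrarian` and `refute` are hypothetical, and the sketch assumes that any candidate "halting decider" is deterministic and always returns an answer. Given any such decider, we build a program that asks the decider about itself and then does the opposite, so the decider is guaranteed to be wrong about at least that one program.

```python
from typing import Callable

# Hypothetical types for this sketch: a "program" takes no input,
# and a "decider" claims to say whether a given program halts.
Program = Callable[[], None]
Decider = Callable[[Program], bool]


def make_contrarian(claimed_halts: Decider) -> Program:
    """Build a program that does the opposite of what the decider predicts."""
    def contrarian() -> None:
        if claimed_halts(contrarian):
            # The decider said "halts", so loop forever instead.
            while True:
                pass
        # The decider said "loops forever", so halt immediately.
    return contrarian


def refute(claimed_halts: Decider) -> str:
    """Show where a candidate decider goes wrong, without ever hanging."""
    contrarian = make_contrarian(claimed_halts)
    if claimed_halts(contrarian):
        # We do not run the program here: by construction it would never return.
        return "decider said 'halts', but the program would loop forever"
    contrarian()  # Safe to run: in this branch it returns straight away.
    return "decider said 'loops forever', but the program halted"


# Two deliberately naive deciders; both are refuted.
print(refute(lambda program: True))
print(refute(lambda program: False))
```

However clever the decider, the same construction applies, which is why no general-purpose halting decider can exist.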

Consider an analogy: Imagine you are an astronomer tasked with confirming whether the universe has a finite number of stars. Using your primitive telescope, you observe and count the stars, but acknowledge that regions exist which are beyond your current observational capabilities. As technology advances and telescopes improve, you can see deeper into space and detect more stars. Each technological advance reveals more of the universe, yet also expands the boundaries of the known cosmos. Similarly, even if our cognitive ‘telescopes’—tools and methods in neuroscience and psychology—improve, they may continually uncover further complexities within the mind, suggesting that full comprehension remains just out of reach.

The Halting Problem suggests there are aspects of computational and cognitive processes that are fundamentally unanswerable or unknowable. However, while complete understanding may be beyond our grasp, partial insights remain incredibly valuable. Advances in neuroscience, psychology, and artificial intelligence continually expand our understanding of cognition, emotion, and consciousness. Although we might never fully “solve” the mind, the knowledge we gain is crucial, enabling practical applications such as the treatment of mental health disorders and the development of sophisticated AI systems.

It seems likely that consciousness, self-awareness, and meta-awareness exist on a spectrum. Why? Because this view aligns with what we know about biological systems. If we consider the structural and functional commonalities across species, it becomes evident that the brain’s architecture—the foundation for conscious experience—shares fundamental similarities across many animals. Humans, like apes, dogs, and mice, possess neurons, synapses, and similar neurochemical processes. The primary difference lies in the complexity and arrangement of these systems. As we ascend the phylogenetic tree, we see not a fundamental change in kind but an increase in complexity, leading to, ostensibly, more sophisticated cognitive functions.

Furthermore, this concept of interconnectedness and communication extends beyond animals. Consider plants: although they lack nervous systems, they still exhibit forms of signalling that resemble communication. Through chemical interactions and mycorrhizal networks [Beiler, 2009], entire ecosystems, like forests, are interlinked, exchanging nutrients [Simard, 1997] and, perhaps, information in a manner that is reminiscent of neural networks. Though the debate rages on regarding the wood-wide-web, these mycorrhizal associations suggest that there are rudimentary forms of responsiveness embedded in the living systems of the planet.

Thus, it could be that every living thing, from the smallest microbe to the tallest tree to the most advanced human, as well as AI or some other form of life out in the cosmos we have yet to meet, occupies a place on this spectrum. While we know that one end of the spectrum begins at a fundamental state of non-consciousness, the other end, like the number of stars in the sky, is a value yet to be determined, and is, possibly, not determinable. Thus, we are not separate or superior; we are simply further along the spectrum than some other forms of life. As we continue to explore this concept, I expect that we will find that other species, such as whales, dolphins, and higher primates, are closer to us on this spectrum than we previously thought. With the advent of artificial intelligence and its application in decoding animal communication [Bushwick, 2023][Sharma, 2024], we may find our notions of human cognitive primacy challenged.

I, like others, suspect that life, in all its forms, is an emergent property of the universe and that it arises whenever conditions are favourable, given enough time. However, the only proof I can offer – until we actually discover extraterrestrial life – is to point out that on this planet life seems to exist almost everywhere we look. From the darkest, crushing depths of the oceans, to scorching hot volcanic vents, to under the ice of the poles, to the hottest, driest desert, life on Earth seems to find a way. So, I hold out the hope that as we explore our own solar system, life of some kind has found a way “out there” and that Enrico Fermi’s famous question will be, at least partially, answered in my lifetime [Sierra, 2016]. Like life, consciousness is also an emergent property that only needs favourable conditions, be they biological or computational – in the case of AI. Thus, as life evolves, it moves up the consciousness spectrum. While intelligent life existing in a technological civilization may be rare in the universe, it does not imply superiority or a greater right to existence than, say, the western lowland gorilla that we are currently hunting to extinction. In fact, if we end up causing our own extinction because of climate change, nuclear war, god-like AI, genetic engineering gone awry, or some other thing – then how smart were we really?

In exploring the concept of consciousness in artificial intelligence, a recent paper [Butlin et al, 2023] examines the potential for gradations of consciousness in AI systems. The authors argue that consciousness may exist in varying degrees rather than being an absolute state, suggesting that AI systems might exhibit traits indicative of different levels of consciousness. This idea parallels the perspective discussed here, suggesting that consciousness is a universal, emergent property arising under favourable conditions. Furthermore, Butlin et al. touch upon what others have suggested in animals, that consciousness exists on a spectrum, from basic interaction with the environment to a comprehensive self-understanding.

Reflecting on the historical notion of consciousness, one might question whether it’s a narrative constructed for comfort. That is, was Descartes’ “Cogito, ergo sum” merely an arrogant solace to elevate us above other life forms? Perhaps. It seems more likely that consciousness is a shared attribute across all living beings, manifesting in various forms and intensities. Such realizations have profoundly changed my own understanding of consciousness over my lifetime, leading me to recognize that traits like consciousness, self-awareness, and meta-awareness are unlikely to be unique to humans. They likely exist on a spectrum, an evolutionary ladder of awareness if you will, that includes all life forms and could extend to synthetic entities. Our position on this spectrum doesn’t imply superiority, but instead imparts greater responsibility. From fish to felines to humans, we are all interdependent threads in the complex tapestry of existence. Recognizing this interconnectedness should not only foster a greater sense of kinship with our fellow inhabitants of this planet, be they organic or synthetic, but also inspire us to be better stewards of the world we all share.

References

[Anderson, 2011]

Anderson, J. R., & Gallup, G. G. (2011). Which primates recognize themselves in mirrors?. PLoS biology, 9(3), e1001024. [Link](https://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.1001024)

[BBC, 2010]

BBC Four. (2010, May 17). The Life of Birds, The Limits of Endurance, Red light runners. BBC. https://www.bbc.co.uk/programmes/p007xvww

[Beiler, 2009]

Beiler, K., Durall, D., Simard, S., Maxwell, S., & Kretzer, A. (2009). Architecture of the wood-wide web: Rhizopogon spp. genets link multiple Douglas-fir cohorts. New Phytologist, 185, 543-553. https://doi.org/10.1111/j.1469-8137.2009.03069.x

[Bushwick, 2023]

Bushwick, S. (February 7, 2023). How scientists are using AI to talk to animals. Scientific American. https://www.scientificamerican.com/article/how-scientists-are-using-ai-to-talk-to-animals/

[Butlin et al, 2023]

Butlin, P., Long, R., Elmoznino, E., Bengio, Y., Birch, J., Constant, A., … VanRullen, R. (2023). Consciousness in Artificial Intelligence: Insights from the Science of Consciousness. arXiv:2308.08708 [cs.AI].

[Emery, 2006]

Emery, N. J. (2006). Cognitive ornithology: the evolution of avian intelligence. Philosophical Transactions of the Royal Society B: Biological Sciences, 361(1465), 23-43. [Link](https://royalsocietypublishing.org/doi/10.1098/rstb.2005.1736)

[Gallup,1970]

Gallup, G. G., Jr. (1970). Chimpanzees: Self-Recognition. Science, 167, 86-87.

[Hall, 2017]

Hall, K., & Brosnan, S. F. (2017). Cooperation and deception in primates. Infant Behavior and Development, 48(Pt A), 38-44. DOI: 10.1016/j.infbeh.2016.11.007

[Harmon, 2011]

Harmon, K. (2011, September 27). The mind of an octopus. Scientific American. https://www.scientificamerican.com/article/the-mind-of-an-octopus/

[Jabr, 2012]

Jabr, F. (August 22, 2012). Does self-awareness require a complex brain? Scientific American Blog. https://blogs.scientificamerican.com/brainwaves/does-self-awareness-require-a-complex-brain/

[Kanaev, 2022]

Kanaev, I. A. (2022). Evolutionary origin and the development of consciousness. Neuroscience & Biobehavioral Reviews, 133, 104511. https://doi.org/10.1016/j.neubiorev.2021.12.034

[Laumer, 2024]

Laumer, I.B., Rahman, A., Rahmaeti, T. et al. Active self-treatment of a facial wound with a biologically active plant by a male Sumatran orangutan. Sci Rep 14, 8932 (2024). https://doi.org/10.1038/s41598-024-58988-7

[Osvath, 2018]

Kabadayi, C., & Osvath, M. (2017). Ravens parallel great apes in flexible planning for tool-use and bartering. Science, 357(6347), 202-204. DOI: 10.1126/science.aam8138

[Plotnik, 2006]

Plotnik, J. M., de Waal, F. B., & Reiss, D. (2006). Self-recognition in an Asian elephant. Proceedings of the National Academy of Sciences, 103(45), 17053-17057. [Link](https://www.pnas.org/content/103/45/17053)

[Prior, 2008]

Prior, H., Schwarz, A., & Güntürkün, O. (2008). Mirror-induced behavior in the magpie (Pica pica): evidence of self-recognition. PLoS biology, 6(8), e202. [Link](https://journals.plos.org/plosbiology/article?id=10.1371/journal.pbio.0060202)

[Reiss, 2001]

Reiss, D., & Marino, L. (2001). Mirror self-recognition in the bottlenose dolphin: A case of cognitive convergence. Proceedings of the National Academy of Sciences, 98(10), 5937-5942. [Link](https://www.pnas.org/content/98/10/5937)

[Rutz et al, 2016]

Rutz, C., Klump, B., Komarczyk, L. et al. Discovery of species-wide tool use in the Hawaiian crow. Nature 537, 403–407 (2016). https://doi.org/10.1038/nature19103

[Sample 2017]

Sample, I. (2017, March 28). Alien intelligence: The extraordinary minds of octopuses and other cephalopods. The Guardian. https://www.theguardian.com/environment/2017/mar/28/alien-intelligence-the-extraordinary-minds-of-octopuses-and-other-cephalopods

[SciAm 2010]

Koerth-Baker, M. Kids (and Animals) Who Fail Classic Mirror Tests May Still Have Sense of Self, Scientific American, November 29, 2010. https://www.scientificamerican.com/article/kids-and-animals-who-fail-classic-mirror/

[Sharma, 2024]

Sharma, P., Gero, S., Payne, R. et al. Contextual and combinatorial structure in sperm whale vocalisations. Nat Commun 15, 3617 (2024). https://doi.org/10.1038/s41467-024-47221-8

[Sierra, 2016]

Sierra, L. (May 19, 2016). Are we alone in the universe? Revisiting the Drake equation. NASA Exoplanet Exploration. https://exoplanets.nasa.gov/news/1350/are-we-alone-in-the-universe-revisiting-the-drake-equation/

[Simard, 1997]

Simard, S., Perry, D., Jones, M., Myrold, D., Durall, D., & Molina, R. (1997). Net transfer of carbon between ectomycorrhizal tree species in the field. Nature, 388, 579-582. https://doi.org/10.1038/41557

[Stanford, 2008]

Stanford Encyclopedia of Philosophy: René Descartes, https://plato.stanford.edu/entries/descartes/

[Turing, 1950]

Turing, A. M. (1950). Computing Machinery and Intelligence. Mind, LIX(236), 433-460. https://doi.org/10.1093/mind/LIX.236.433

[Turing, 1937]

Turing, A.M. (1937), On Computable Numbers, with an Application to the Entscheidungsproblem. Proceedings of the London Mathematical Society, s2-42: 230-265. https://doi.org/10.1112/plms/s2-42.1.230